The Future of Voice Assistants: Beyond Alexa and Siri

Voice assistants have undergone one of the most rapid transitions from novelty to utility in the history of consumer technology. In barely a decade, the ability to speak naturally to a device and receive a relevant, contextual response has moved from science fiction to everyday expectation — built into smartphones, smart speakers, televisions, cars, home appliances, and an expanding array of professional environments. Yet for all their visible presence, the Alexas and Siris of today represent only the earliest chapter of what voice technology will become.

The limitations of current voice assistants are well-known to anyone who uses them regularly: they handle a defined range of commands well and struggle with ambiguity, context, and anything outside their training; they are sensitive to accent and background noise; and they rarely maintain coherent context across even a short conversation. These limitations are not accidents of design — they reflect the genuine, profound difficulty of natural language understanding, which remains one of the hardest problems in artificial intelligence and signal processing research.

What makes this moment particularly significant for engineering students is that the technical challenges blocking the next generation of voice technology — contextual AI, edge computing, advanced signal processing, low-power hardware — are precisely the problems being worked on in research labs and engineering departments right now. Students who understand the technology landscape and choose their education accordingly will be among those who build what comes next.

The State of Voice Technology Today

Today’s leading voice assistants (Amazon Alexa, Apple Siri, Google Assistant, and Samsung Bixby) are capable within specific, well-defined use cases. They can set alarms, play music, control smart home devices, answer factual questions, make calls, and manage calendar entries with reasonable reliability. The commercial success of smart speaker platforms and the integration of voice interfaces into billions of devices demonstrate that voice is a genuinely useful modality for human-computer interaction in the right context.

However, these systems remain brittle in ways that reveal the distance still to travel. They lose context between exchanges, struggle with multi-step reasoning, are easily confused by ambiguous phrasing, and perform poorly with unfamiliar accents or in noisy environments. They also depend heavily on cloud connectivity — sending audio to remote servers for processing — which introduces latency, privacy concerns, and reliability issues in environments where connectivity is limited.

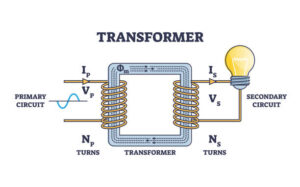

For students studying electronics and communication engineering, the technical architecture behind these systems is directly relevant curriculum content. From microphone array design and acoustic echo cancellation to neural network inference and cloud API integration, modern voice assistant systems span the full range of ECE and computer science disciplines.

The Key Technologies Driving the Next Generation

Large Language Models and Contextual Understanding

The most significant recent advance in voice technology has been the application of large language models (LLMs) — the class of AI system underlying tools like ChatGPT — to conversational interfaces. Unlike earlier natural language processing approaches, LLMs can maintain context across extended conversations, handle ambiguity and implicit references, generate nuanced and contextually appropriate responses, and reason across multiple steps of a problem.

Integrating LLM capabilities with real-time voice input and output, while managing the computational cost and latency involved, is one of the defining engineering challenges of the current moment. The voice assistants of the next generation will feel qualitatively different from their predecessors because this integration is now technically feasible in a way it was not five years ago.

Edge AI and On-Device Processing

Current voice assistants overwhelmingly rely on cloud-based processing: the device captures audio and transmits it to remote servers, which process it and return a response. This architecture introduces latency, requires internet connectivity, and raises significant privacy concerns, because users’ audio is transmitted to, and may be stored on, technology companies’ servers.

Edge AI — the ability to run sophisticated AI models directly on the local device, without cloud transmission — addresses all three of these issues simultaneously. On-device processing enables faster responses, works in environments without reliable internet connectivity, and keeps sensitive audio data on the user’s own device. Achieving this with the computational and power constraints of consumer hardware is a major engineering challenge requiring advances in both semiconductor design and model compression techniques — exactly the territory of electronics and communication engineering research.
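One of the model compression techniques mentioned above is weight quantization: storing each weight as an 8-bit integer plus a shared scale factor instead of a 32-bit float, which cuts storage to roughly a quarter. The following is a minimal sketch of symmetric per-tensor quantization, not the method of any particular product. The function names and the example weights are hypothetical, chosen only for illustration.

```python
def quantize_int8(weights):
    """Map float weights to integer codes in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # The largest-magnitude weight maps to +/-127; guard against all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, standing in for one layer of a small speech model.
weights = [0.82, -1.27, 0.03, 0.5, -0.441]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Rounding to the nearest code bounds the error by about half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

The trade-off is visible in `max_error`: each restored weight is off by at most about half of `scale`, and whether that loss is acceptable depends on the model and the task.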

Advanced Signal Processing and Multi-Microphone Arrays

One of the most persistent practical limitations of current voice assistants is their sensitivity to background noise and speaker distance. Multi-microphone array technology, combined with advanced signal processing algorithms for beamforming and noise suppression, addresses this limitation by enabling the device to directionally focus on a speaker’s voice and actively cancel competing audio sources. This technology is already present in premium smart speakers, but its extension to smaller, more constrained devices — earbuds, wearables, embedded systems — requires continued innovation in hardware and signal processing algorithm design.
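The simplest form of beamforming is delay-and-sum: shift each microphone's signal so that sound from the target direction lines up across channels, then average. The target adds coherently while uncorrelated noise partly averages out. The sketch below simulates this under stated assumptions: four microphones, known integer sample delays, a sine wave standing in for speech, and independent Gaussian noise at each microphone. All names and numbers are hypothetical, and real arrays use more sophisticated adaptive methods.

```python
import math
import random


def delay_and_sum(channels, delays):
    """Advance each channel by its known delay (in samples), then average."""
    usable = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[n + d] for ch, d in zip(channels, delays)) / len(channels)
        for n in range(usable)
    ]


def mean_square_error(signal, reference):
    """Average squared difference over the overlapping samples."""
    pairs = list(zip(signal, reference))
    return sum((x - y) ** 2 for x, y in pairs) / len(pairs)


random.seed(0)  # fixed seed so the simulation is repeatable
num_samples = 2000
delays = [0, 3, 6, 9]  # assumed arrival delay at each microphone, in samples
speech = [math.sin(2 * math.pi * 0.01 * n) for n in range(num_samples)]

channels = []
for d in delays:
    delayed = [0.0] * d + speech[: num_samples - d]
    # Each microphone picks up its own independent noise.
    channels.append([s + random.gauss(0.0, 0.5) for s in delayed])

output = delay_and_sum(channels, delays)
single_mic_error = mean_square_error(channels[0], speech)
beamformed_error = mean_square_error(output, speech)
print(single_mic_error, beamformed_error)
```

With independent noise of equal power, averaging four aligned channels should cut the noise power to about a quarter of a single microphone's, which is why the beamformed error comes out lower. In a real device the delays are not known in advance and must be estimated from the speaker's direction.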

Emotion Detection and Empathic Response

Voice carries far more information than words alone — tone, pace, pitch variation, and stress patterns all convey emotional state and communicative intent. Future voice assistants will increasingly incorporate the ability to detect and respond to these paralinguistic signals, enabling interactions that are more natural, contextually appropriate, and genuinely useful in emotionally complex situations. This capability has significant applications in healthcare, mental health support, education, and customer service.
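Pitch is one of the paralinguistic signals listed above, and a classic way to estimate it is autocorrelation: a periodic signal correlates most strongly with a copy of itself shifted by one period. The sketch below is a minimal illustration on a clean synthetic tone, assuming a hypothetical function name, an 8 kHz sample rate, and a 60 to 400 Hz search range. Real speech is far less tidy, and emotion detection involves much more than pitch.

```python
import math


def estimate_pitch_hz(frame, sample_rate, min_hz=60.0, max_hz=400.0):
    """Return the frequency whose period maximises the frame's autocorrelation."""
    min_lag = int(sample_rate / max_hz)
    max_lag = int(sample_rate / min_hz)
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with itself shifted by `lag` samples.
        score = sum(frame[n] * frame[n + lag] for n in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


sample_rate = 8000
true_pitch = 200.0  # synthetic test tone, standing in for a voiced sound
frame = [
    math.sin(2 * math.pi * true_pitch * n / sample_rate)
    for n in range(800)  # 100 ms of audio
]

pitch = estimate_pitch_hz(frame, sample_rate)
print(pitch)
```

Tracking how an estimate like this rises, falls, and varies over successive frames is one input a system could use to infer stress or emphasis, alongside pace and loudness.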

Ambient and Embedded Voice Interfaces

The smartphone and dedicated smart speaker are not the final home for voice assistant technology. The next major phase of deployment is ambient — voice interfaces embedded invisibly in glasses, earbuds, workspaces, vehicles, retail environments, and public infrastructure, available without a visible dedicated device. This vision requires miniaturised, energy-efficient hardware capable of continuous, always-on speech detection and processing — a formidable hardware engineering challenge that will occupy ECE researchers and engineers for years to come.
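A common way to keep always-on listening within a tight power budget is to run a very cheap check continuously and wake the expensive speech pipeline only when that check fires. The sketch below shows the simplest such gate, a frame-energy threshold, under stated assumptions: the function names, frame length, threshold, and test signal are all hypothetical, and production systems typically use small trained detectors rather than a fixed threshold.

```python
def frame_energy(frame):
    """Mean squared amplitude of one frame."""
    return sum(s * s for s in frame) / len(frame)


def detect_active_frames(samples, frame_len, threshold):
    """Flag each non-overlapping frame whose energy exceeds the threshold."""
    flags = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        flags.append(frame_energy(frame) > threshold)
    return flags


frame_len = 160  # 20 ms at an assumed 8 kHz sample rate
quiet = [0.01 * (-1) ** n for n in range(frame_len)]  # background hum
loud = [0.5 * (-1) ** n for n in range(frame_len)]    # someone speaking

# Three quiet frames, two loud frames, then quiet again.
samples = quiet * 3 + loud * 2 + quiet
flags = detect_active_frames(samples, frame_len, threshold=0.01)
print(flags)  # [False, False, False, True, True, False]
```

Only the frames flagged `True` would be passed on to wake-word or speech recognition, so the costly stages stay idle most of the time.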

Career Opportunities in Voice Technology

The engineering skills relevant to voice assistant development span a wide and growing range: digital signal processing, machine learning and neural network design, embedded systems engineering, low-power hardware design, software systems integration, and human-computer interaction design. This interdisciplinary scope means that graduates from strong electronics and communication engineering backgrounds are well positioned for roles in this space — particularly those who have developed complementary skills in AI and software alongside their core hardware and signal processing knowledge.

Industry investment in voice technology is substantial and growing. Semiconductor companies are designing specialised AI accelerator chips for on-device processing; consumer electronics companies are embedding voice interfaces into an expanding range of products; healthcare technology companies are exploring voice as an accessibility and diagnostic tool; and automotive companies are building voice interaction into the in-vehicle experience. Graduates from the best engineering colleges in Bangalore who develop relevant skills in signal processing and AI will find strong demand across all these sectors.

Why ECE Students Are Particularly Well Positioned

The technical problems that define the frontier of voice technology — noise cancellation, energy-efficient inference, hardware-software co-design, low-latency signal chains — are problems that a rigorous ECE education addresses directly. Students who combine the hardware fundamentals, signal processing theory, and mathematical depth of a good ECE programme with practical exposure to machine learning and software development will find themselves at the exact intersection of disciplines where voice technology development happens.

Among engineering colleges in Bangalore, those with strong research cultures, modern signal processing and embedded systems laboratories, and active industry collaborations are the ones best equipped to prepare students for careers at this frontier. The presence of major technology companies and active research institutions in Bangalore creates genuine opportunities for students who seek out the right environment.

Frequently Asked Questions

1. What engineering backgrounds are most relevant to voice assistant development?

Electronics and communication engineering, computer science with a signal processing focus, and electrical engineering are the most directly relevant. Machine learning knowledge is increasingly essential across all these disciplines for roles in this space.

2. Will voice assistants eventually replace typed and touch interfaces?

Not entirely. Typed input remains faster and more precise for many tasks, and visual interfaces are essential in many contexts. But voice is likely to become the dominant interface in hands-free, accessibility-focused, ambient, and embedded computing contexts, which together represent a large and growing share of human-computer interaction.

3. What is edge AI, and why does it matter for voice technology?

Edge AI refers to running artificial intelligence models locally on a device rather than sending data to remote cloud servers. For voice assistants, it enables faster responses, works without internet connectivity, and keeps sensitive audio data private — all significant practical advantages.

4. Is a career in voice and AI technology stable over the long term?

The underlying skills — signal processing, embedded systems, neural network design — are foundational and broadly applicable across many domains beyond voice technology specifically. Engineers with these competencies will find sustained demand regardless of how specific product categories evolve.

5. How do engineering colleges in Bangalore prepare students for AI-related careers?

The strongest programmes integrate AI, machine learning, and signal processing content into the core ECE curriculum, provide access to research laboratories and live projects, and maintain active partnerships with industry — giving students practical exposure to the problems they will encounter professionally.